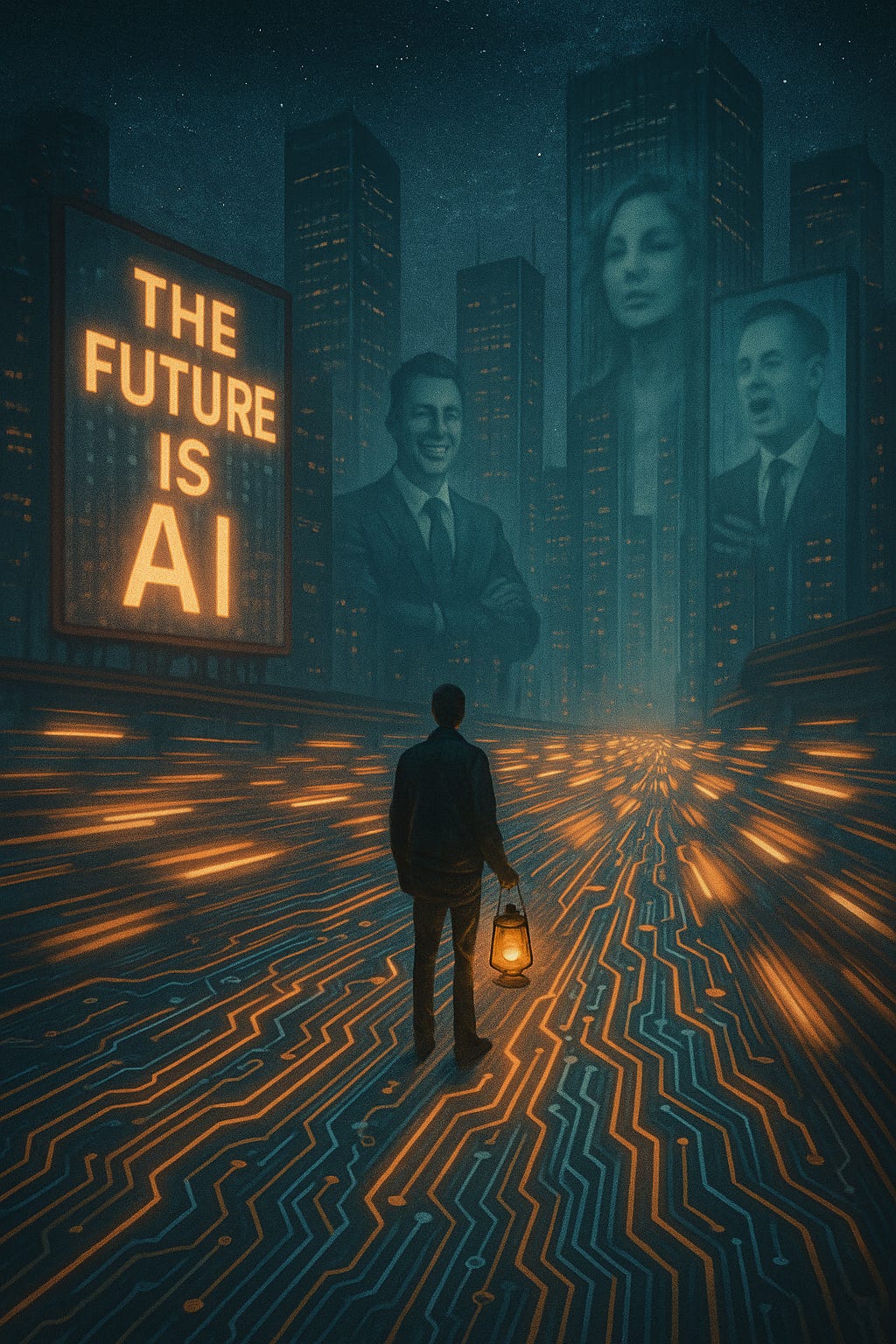

I'm noticing the shifts: AI hype, digital exhaustion, and the leadership mirage

A storm is coming.

I’m worried that we are all moving too fast to see the people we are leading. If you felt that weight today, you aren’t alone. I send out a note every day to help us stay human in this machine. I’d love to have you in the room with us.

In the rush to adopt AI, many leaders are repeating the mistakes of the Cloud and Web3 eras: prioritizing speed over sustainability. True leadership isn't about moving fast; it's about asking who benefits from the rush. This post explores the "Leadership Pause" and how to protect your team from AI hype fatigue and vendor-driven urgency.

I’ve worked in tech for more than twenty years. I’ve seen waves come and go: cloud, DevOps, agile, “digital transformation,” all paraded as the revolution that would change everything forever. Each had its merits, but each also carried noise, marketing, and opportunism.

Now it’s 2025, and the wave is called AI.

I can’t scroll LinkedIn without someone declaring AI as the future of work, the savior of industry, the cure for our lack of productivity, creativity, maybe even humanity itself.

CEOs, founders, and consultants chant the mantra:

“Adapt or be left behind.”

Politicians echo it because they see the votes, the economic boost, the geopolitical leverage.

And yet, underneath this booming chorus, I feel something else moving. A quieter rhythm, more skeptical, less seduced by the glitter. It’s the same rhythm I’ve felt before, when we were told cloud would fix everything, when blockchain was going to democratize finance, when Web3 was “inevitable.” It’s the sound of exhaustion, of people tired of hype cycles and disappointed promises.

That’s where I want to pause. To notice the shifts, not just in technology but in us: in our energy, in our trust, and in the way leadership is being reshaped, or distorted, under the glow of AI.

Why I’ve Learned to Pause

One lesson I carry from two decades in tech: rushing rarely serves anyone but the vendors.

I’ve been in rooms where executives made multi-million-dollar bets on platforms they didn’t understand, swept up in glossy presentations from Silicon Valley. I’ve watched governments spend fortunes on software that never delivered. I’ve witnessed teams burn out implementing tools that solved the wrong problems because no one asked the right questions.

So when I see the current AI gold rush, I can’t help but pause.

The story is familiar: adopt quickly, embed deeply, make yourself dependent. Once the system becomes infrastructure, moving away is almost impossible. We saw it with cloud lock-in, with proprietary SaaS ecosystems, with the way social media monopolized attention. Now the same playbook is unfolding with AI.

And the question I keep asking myself and my clients is: who benefits from the rush?

It isn’t workers, whose jobs are destabilized without a safety net. It isn’t small businesses, who can’t compete with enterprise-level adoption. It isn’t citizens, who lose autonomy when public services outsource to algorithms.

The beneficiaries are the same few corporations, whose futures are written into our infrastructure before we’ve had a democratic conversation about alternatives.

That’s why pause is not passivity. Pause is strategy. Pause is noticing the stories being sold, asking who writes them, and deciding whether we want to buy in.

The Seduction of Surrender

One of the most dangerous narratives right now is the invitation to surrender critical thinking.

“No need to study, AI will do it.”

“Don’t waste time in university, AI can teach you better.”

“Why bother learning? AI already knows.”

I’ve heard variations of these lines from professionals, influencers, even some educators. Each time, I get the same knot in my stomach. Because behind the promise of freedom is a trap: the erosion of human curiosity and autonomy.

I coach engineers, managers, and leaders every week. The ones thriving with AI aren’t those outsourcing their minds. They’re the ones using AI as a sparring partner, a thinking aid, a way to challenge or expand their own ideas. They treat AI like a calculator: incredibly powerful, but never a substitute for understanding.

If you don’t know the math, the calculator just gives you numbers you can’t judge. If you don’t know the context, AI just gives you words you can’t trust.

That’s why the most valuable knowledge to invest in today isn’t what AI does well. It’s what AI cannot replicate: the depth of cultural context, the weight of ethical judgment, the mess of history, the nuance of human relationships.

AI will always need human oversight. The question is whether we will have enough wise, skilled, and critical humans left to provide it.

Leadership as Performance

Here’s another shift I’ve been noticing, and it worries me even more than the hype.

The rise of fake leadership.

Everywhere you look, leaders are praised not for integrity, but for performance. Style over substance. Eloquence over honesty. The ability to spin a story about the future, rather than the willingness to sit in the discomfort of the present.

Tech has always been filled with “visionaries.” But the paradox now is sharper: the same people selling us AI utopias keep their own children away from the very tools they promote. They send their kids to schools with no screens, no ChatGPT, no algorithmic babysitters. They carry dumb phones or hire human assistants.

What does that say?

It says they know the risks. It says they’re protecting their families while telling us, “trust the system.” It says leadership, in these cases, is not about stewardship but about influence and control.

And I don’t buy it.

Because in my coaching, I see what real leadership looks like. It isn’t polished. It isn’t always eloquent. It’s someone willing to admit uncertainty, to ask hard questions, to share power instead of hoarding it. It’s a leader who values people, not just productivity.

AI cannot make that kind of leader. In fact, AI hype often distracts us from demanding it.

Digital Exhaustion: The Silent Weight

Let’s talk about something I rarely hear mentioned in AI conversations: the exhaustion.

The emails never stop. The dashboards keep multiplying. Every tool demands attention, updates, integrations. And now AI adds another layer: prompts, fine-tuning, policies, compliance, hallucinations to check.

It’s not that the technology doesn’t help. It does. But it also demands something back: our focus, our adaptation, our trust. And the more tools we stack, the thinner we spread our attention.

I’ve coached leaders who are drowning not in work itself, but in the constant recalibration of tools. Every quarter, a new platform. Every project, a new workflow. Every week, a new “must-have” AI plugin. Their teams aren’t resistant to change; they’re just tired of being asked to sprint a marathon.

Digital exhaustion is not a personal failing. It’s a systemic consequence of endless disruption, sold as progress. And if leaders don’t recognize it, they risk losing the very people who could help them succeed.

The Blind Spots of AI Leadership

If you want to see where the AI bubble will burst, don’t look at the tech.

Look at the leadership gaps:

Agility & Vision: Many executives don’t actually know what they want AI for. They approve budgets because they don’t want to appear behind, not because they have a strategy. Projects stall, not for lack of tech, but for lack of clarity.

AI Literacy & Governance: Few leaders understand the mechanics well enough to govern responsibly. Bias is automated, compliance risks multiply, and employees lose trust when they see opaque decisions justified as “the algorithm’s output.”

Scaling Bias: AI doesn’t eliminate human prejudice; it multiplies it at scale. Without oversight, organizations end up embedding inequality into every system they deploy.

Change Management: AI isn’t plug-and-play. It changes workflows, fears, identities. If leaders don’t create psychological safety and clear communication, adoption fails.

Lifelong Learning: Skills shift too fast for one-off training. Leaders need to build cultures of continuous learning. Few do.

Human Skills: Empathy, ethics, adaptability: these remain irreplaceable. But many leaders neglect them, thinking AI can fill the gap. It can’t.

Ethical Transparency: Every AI rollout raises questions of fairness and accountability. Leaders who ignore this will face backlash: legal, reputational, or both.

Business Value: Hype drives adoption, but ROI often disappoints. Real impact requires patience, integration, and metrics beyond cost-cutting.

Talent Gaps: There aren’t enough AI-savvy leaders. Outsourcing to vendors only deepens dependence.

These blind spots aren’t theoretical. I’ve seen them firsthand. I’ve watched companies spend millions chasing efficiency while alienating their people. I’ve seen leaders embrace AI for headlines, then quietly shelve projects when no one could prove value.

The tech won’t kill them. Their own leadership failures will.

The Future Is Still Human

After all this, I keep returning to one truth: the future is still human.

AI is powerful, yes. Like the calculator, like the steam engine, like electricity, it will reshape the way we live and work. But no one compares themselves to a calculator. No one asks a lightbulb for wisdom.

The human brain, with its creativity, its empathy, and its messy moral reasoning, is not replaceable. The danger is not that AI will surpass us. The danger is that we forget what makes us valuable in the first place.

When I work with leaders, I don’t coach them to “be more like AI.” I coach them to be more like themselves: clearer, more empathetic, more courageous, more aware of their nervous systems, their biases, their responsibilities.

Because people don’t follow algorithms. They follow people they trust.

The Need for AI Education and Literacy

Based on both experience and observable trends, it is clear that grounding AI education in a blend of statistics, social sciences, ethics, and computer science is not merely an academic exercise but a practical necessity. From working closely with diverse teams in tech environments, I have found that those who approach AI with a multidisciplinary mindset are significantly better equipped to face its complexities. They understand that AI's impact reaches far beyond algorithms, shaping how we communicate, influence culture, redefine work, and govern societies.

Without this broad education, I have seen professionals and leaders fall into the trap of overreliance on AI outputs, accepting them as unquestionable truths. This reliance often diminishes essential human qualities such as judgment, creativity, and ethical discernment. True leadership today, therefore, isn’t about mastering the latest AI tools alone—it's about cultivating the wisdom to ask probing questions, challenge assumptions, and recognize the social and ethical stakes involved.

From firsthand experience, the most resilient organizations are those that invest consistently in developing this kind of human wisdom alongside technological prowess. These organizations actively foster a culture of lifelong learning and ethical responsibility, which serves as a vital counterbalance to cycles of AI hype and potential marginalization.

In this light, education efforts are not just remedial but transformative: they prepare people to be cautious yet curious, to wield AI as a powerful but accountable partner. The future belongs to those who understand that human and machine collaboration works best when it’s guided by grounded knowledge, reflective judgment, and a commitment to shared values.

My Call to Leaders

There will be an AI bubble. Some startups will disappear.

Big tech will face more regulation. But the deeper shift won’t be about the technology; it will be about us.

The leaders who survive won’t be the loudest evangelists or the quickest adopters. They’ll be the ones who:

Invest in human wisdom as much as machine learning.

Build cultures of trust instead of tools of control.

Pause when everyone else is rushing.

Lead with empathy, not just efficiency.

Treat AI as a partner, not a savior.

I tell my clients this: we are not cattle. We don’t have to be herded into infrastructures designed to profit a few at the expense of the many.

We have choices about how we learn, how we lead, how we connect.

The hype will fade. The exhaustion will surface.

The fake leaders will be revealed. And when that happens, what remains will be the same as it ever was: our humanity.

That’s where the real work of leadership lives.

About the Author

Tino Almeida is a tech leader, coach, and writer reshaping how we think about leadership in a burnout-driven world. With over 20 years at the intersection of engineering, DevOps, and team culture, he helps humans lead consciously—from the inside out. When he’s not challenging outdated norms, he’s plotting how to make work more human—one verb at a time.
