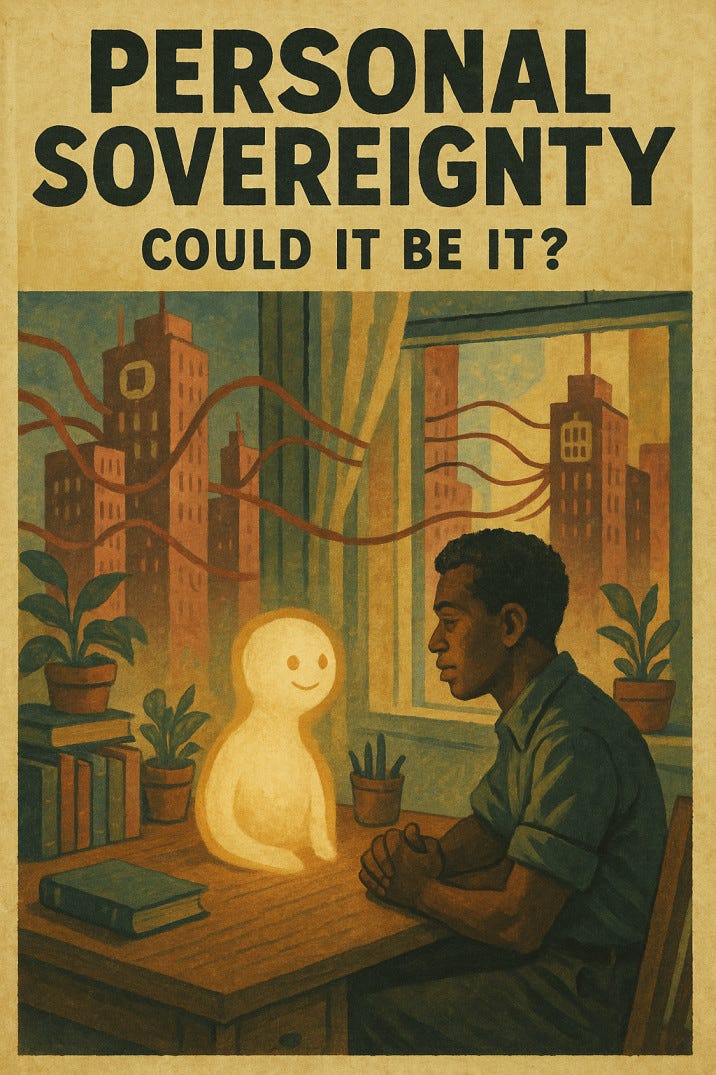

What if the scramble for AI is about avoiding the rise of personal sovereignty?

There’s a version of the future that nobody with a board seat really wants to talk about.

It’s not a dystopia. It’s not even utopian. It’s just a quiet, radical shift.

One day I paused and started imagining what happens if, and when, every person has their own AI.

Not a branded assistant or a paid subscription. Not something mediated through an app or platform. But a sovereign, personalised intelligence owned, trained, and run by the individual. Yours grows with you, learns your context, helps you think better, plan better, rest better. It’s not connected to anyone’s cloud unless you want it to be. It doesn’t serve a business model. It serves you.

If that became the norm, the entire structure of today’s mega corporations would be under threat. Not because AI replaces companies outright, but because it changes where the power sits: away from centralised platforms and massive data warehouses, and towards something far more distributed and intimate, the individual.

And frankly, I think that’s what this current AI scramble is really about. Not just innovation. Not just competition.

But control. It’s a pre-emptive strike to make sure personal AI stays impossible, or at least too inconvenient to be desirable.

Let me explain.

The New Frontier of Shared Leadership

We often talk about leadership in terms of teams, influence, vision. But in a world shaped by algorithms and invisible platforms, I think we need to start somewhere else: with personal sovereignty.

Because how can you lead others ethically, collaboratively, and with depth if your time, attention, and decisions are quietly shaped by systems you don’t control?

Shared leadership isn’t just about flattening hierarchies or empowering others. It starts with reclaiming our own capacity to think clearly, to choose wisely, to act intentionally. And that means having tools that serve us, not the systems we’re trapped inside.

That’s why I believe the future of leadership will be deeply tied to the kind of AI we allow or refuse to build.

If we accept AI as a centralised service, rented from a handful of corporations, we reinforce the very power structures that shared leadership aims to dismantle.

But if we imagine something different (sovereign AI, personal AI, co-evolving AI), then we open the door to a kind of leadership rooted not in control, but in clarity, context, and agency.

That’s the future I want to explore.

Because maybe the most important leadership work right now isn’t about scaling teams.

Maybe it’s about scaling trust in ourselves.

The Platform Model Is Showing Its Cracks

Every major tech company today is built on a simple idea: aggregation.

They aggregate our attention. Our habits. Our purchasing power. Our data. They don’t necessarily offer the best products; they offer the stickiest systems. You go to Google to search, Amazon to shop, LinkedIn to job-hunt, Spotify to listen, YouTube to learn, Microsoft or Apple to work.

Each platform does one thing really well but, more importantly, it traps you in its way of doing it. Its UX becomes muscle memory. Your preferences are slowly shaped to fit its models. And it monetises your time, data, and behavior through subscriptions, ads, and proprietary ecosystems.

In this world, you don’t own the tools. You don’t own the outcomes. You rent access to function. Convenience in exchange for control.

It’s worked incredibly well for them.

But I believe personal AI is a glitch in that system.

When You Become the Platform

If you had an AI that understood your goals, your emotions, your daily rhythms, and your working style, one that lived entirely on your device or on a private server you controlled, you wouldn’t need to jump between a dozen platforms to get things done.

Your AI could:

Summarise your email and help you write replies

Track your mental health patterns and recommend adjustments

Filter your news based on your actual curiosity, not engagement bait

Manage your calendar with deep knowledge of your energy levels

Help your kids learn based on their personality and not some generic curriculum

You wouldn’t need Gmail, Outlook, LinkedIn, Calm, Notion, or half of the productivity stack. Your AI would orchestrate all of that with your logic, your values, and your privacy in mind.

That would absolutely wreck the platform model. Because suddenly, you’re not the product. You’re not even a user. You’re the ecosystem.

AI Doesn’t Have to Mean Centralisation

Right now, most people associate AI with the cloud. You type a prompt, it goes to a server farm, and a large language model spits back a clever paragraph. That model was trained on billions of data points, most of them scraped without consent, and you interact with it through a branded UI. Microsoft, OpenAI, Google, Meta: they’re not offering intelligence. They’re offering access to their intelligence, on their terms.

That’s not a technical necessity. It’s a business decision.

Language models, especially smaller and more efficient ones, can run locally. You can already fine-tune open-source models on your own data. You can run inference on laptops, phones, even Raspberry Pis with the right setup. It’s not science fiction. It’s happening, just not at the scale or with the ease that would make it mainstream.
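To make that a little more concrete, here is a rough back-of-the-envelope sketch in Python. It assumes that the memory needed just to hold a model's weights is roughly the parameter count times the bits stored per weight, and it ignores activations, context cache, and runtime overhead. The function name `weight_memory_gb` and the example model sizes are hypothetical, chosen only for illustration.

```python
def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes).

    Assumption: every weight is stored at the same precision. Real runtimes
    need extra memory on top of this for activations and caches.
    """
    return n_params * bits_per_weight / 8 / 1e9


# Hypothetical model sizes, for illustration only.
example_models = [("1B-parameter model", 1e9), ("7B-parameter model", 7e9)]

for name, n_params in example_models:
    for bits in (16, 4):  # 16-bit weights vs. 4-bit quantised weights
        size = weight_memory_gb(n_params, bits)
        print(f"{name} at {bits}-bit: ~{size:.1f} GB of weights")
```

Under these assumptions, a 7-billion-parameter model quantised to 4 bits needs about 3.5 GB for its weights, which is within reach of an ordinary laptop.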

Because here’s the uncomfortable truth: if everyone had easy access to sovereign AI, the mega corporations would lose their grip. Not gradually. But at scale, and almost silently.

No more tracking. No more behavioral nudges. No more forced subscriptions. No more growth-at-all-costs logic shaping every interface.

Just you, your machine, and the intelligence you build together.

Why the Current AI Race Feels Like a Land Grab

So if personal AI is so powerful, why isn’t it being pushed by the tech giants?

Because it threatens everything they’ve built.

Instead, they’re racing to integrate AI into everything they already control. Microsoft is embedding Copilot into Windows, Office, and Teams, essentially wrapping its productivity empire in AI to keep you locked in. Google is doing the same with Gemini inside Android and Gmail. Meta is adding AI personas to Instagram and WhatsApp. Amazon is scrambling to make Alexa useful before it becomes irrelevant.

This isn’t innovation. It’s enclosure.

They’re fencing off access to intelligence. They want to own the interface, the language models, the APIs, the training data. And they want to make sure you never wonder what it would be like to have that power on your own terms.

That’s the part I find most disturbing: not just the business strategy, but the quiet assumption that AI should belong to someone else. That intelligence itself must be rented.

Personal AI Isn’t Just a Tool. It’s a Relationship

There’s something uniquely powerful about a system that learns with you. Not just about your data or preferences, but your emotions, your contradictions, your aspirations.

An AI that knows when you’re spiraling, when you need quiet, when you’re stuck in a creative rut or making the same mistake again and again. Not because it’s tracking you like a predator, but because it cares about your evolution.

That might sound like a stretch. But intelligence is always relational. Teachers. Coaches. Therapists. Partners. We grow when we’re mirrored, supported, challenged, and seen.

A sovereign AI could do that. Not replace relationships, but deepen them by helping us know ourselves. Imagine being able to surface your patterns over time. To hear back your own language and see how your thinking has evolved. To practice ideas in private before facing public scrutiny.

That’s not a productivity hack. That’s an upgrade in how we live, learn, and lead.

And that’s exactly why it’s not being funded by VCs.

What They Really Fear

They’re not afraid of AI becoming too powerful.

They’re afraid of it becoming too personal.

They fear a world where knowledge is not centralised. Where behavior is not monetised. Where creativity is not filtered through algorithms. Where influence is not dependent on reach or scale.

They fear what happens when people stop logging in and start tuning in.

Because if even a small percentage of people opt out (build their own tools, unplug from extractive platforms, and develop sovereign relationships with their own digital companions), the existing system loses leverage.

Quietly. Without protest. Just through non-participation.

And when enough people do that, culture changes.

We Need to Reclaim Imagination

Right now, the battle isn’t only technical. It’s cultural. Imaginative.

We’re being sold a future where AI is always someone else’s product. But we need to fight for a future where AI is ours. Not just in terms of ownership, but alignment. Growth. Agency.

This means:

Supporting open-source AI projects and making them accessible to everyday people

Demanding data rights, digital sovereignty, and algorithmic transparency

Teaching others, especially young people, that AI isn’t magic. It’s math + narrative + power

Creating and sharing our own tools, imperfect but personal

Thinking beyond scale and into depth: what kind of intelligence do we actually want to grow?

I don’t want a perfect AI. I want one that evolves with me. That knows my flaws, speaks my language, helps me think more clearly, and doesn’t work for anyone else.

I want tools that help me be more human, not more efficient for someone else’s bottom line.

Who Owns It.

I believe the real revolution isn’t AI itself. It’s who owns it. Who trains it. Who it answers to.

The scramble we’re seeing right now is not about building the best intelligence. It’s about making sure you never get to own one.

But you can. We can.

And the sooner we demand it, the more likely we are to build a world where intelligence isn’t centralised and sold back to us, but shared, grown, and deeply personal.

Because in the end, maybe the most radical thing you can do is not to be more productive.

But to be sovereign.

About the Author

Tino Almeida is a tech leader, coach, and writer reshaping how we think about leadership in a burnout-driven world. With over 20 years at the intersection of engineering, DevOps, and team culture, he helps humans lead consciously from the inside out. When he’s not challenging outdated norms, he’s plotting how to make work more human, one verb at a time.
