Large Language Models: What People Must Know

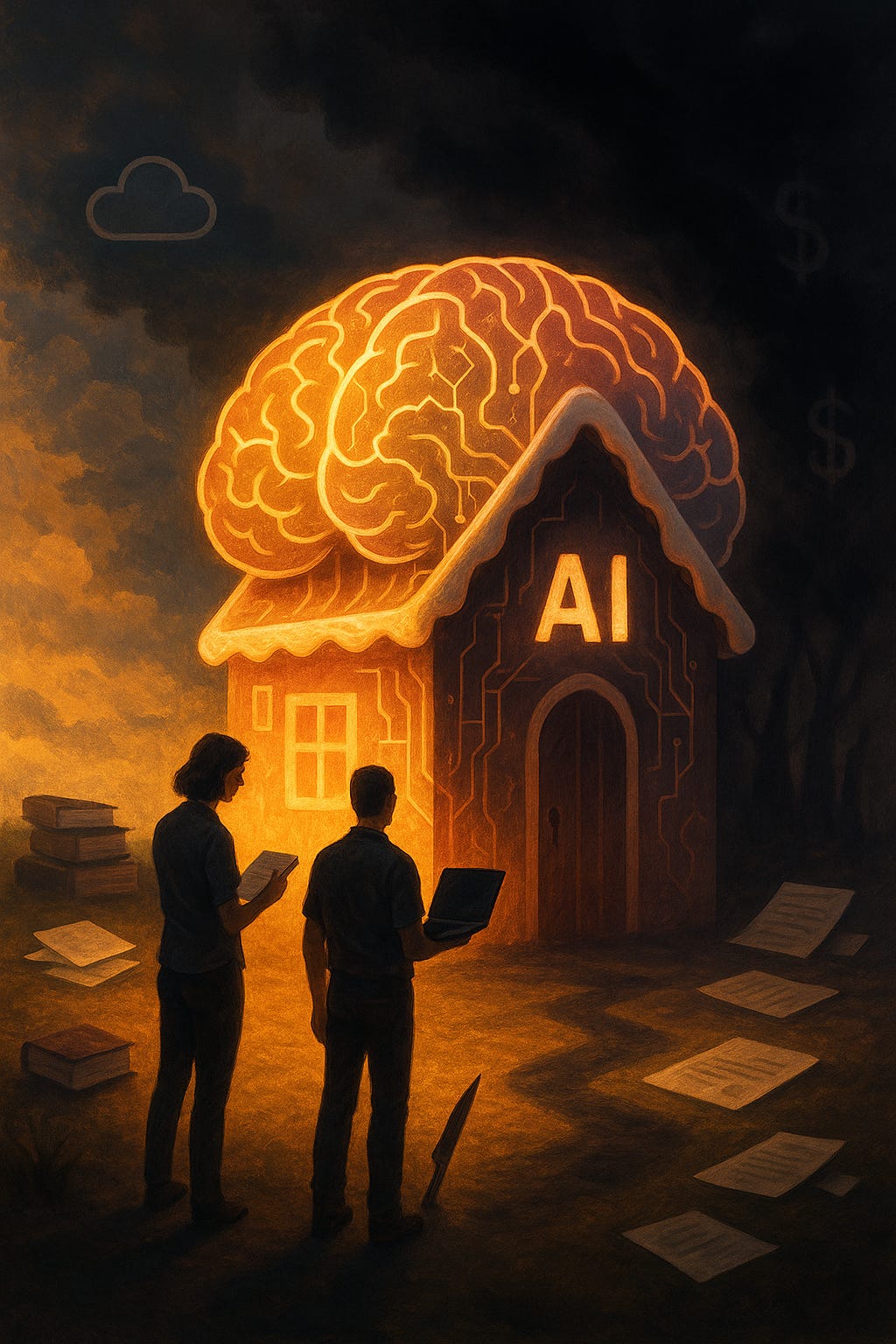

Why Your Friendly Chatbot Might Be a Candy House in Disguise...

Before we start, I suggest you consider this: don’t blame LLMs, or even AI in general; blame the business models behind them. We keep focusing on the technology and forgetting the people behind the products.

Walk into any tech conference, scroll your news feed, or sit in on a boardroom briefing and you’ll hear the same buzzwords: AI, machine learning, LLMs, ChatGPT.

You’ll also see the same breathless headlines about billions being poured into data centers and “intelligent” chatbots that will supposedly change life as we know it.

Here’s the first reality check: a Large Language Model (LLM) is not intelligent.

Not mine, not yours, not the one answering this sentence. It’s a giant, sophisticated statistical engine trained to predict the next most likely word. Nothing mystical hides behind the math. It knows nothing. It’s a very good dice-roller.

If you own a company, work in tech, or simply use an AI chatbot to draft emails or summarize an article, understanding what these systems can, and just as importantly cannot, do is now basic digital literacy.

The Mindset of Big Tech Leaders

Many of today’s AI and tech leaders are highly solution-oriented individuals who approach challenges as problems primarily solvable through code and technology. Efficiency, scalability, and rapid innovation often become the ultimate measures of success, while human complexity (emotions, vulnerabilities, and unpredictability) tends to be reduced to data points and metrics in an ongoing optimisation cycle.

This mindset is deeply embedded in engineering and startup culture and reinforced by competitive market pressures.

The drive for technological progress is seen not only as integral to business growth but as the defining path for societal advancement.

However, for some of the most prominent figures, accumulating vast influence and wealth has fostered a sense of exceptionalism, sometimes characterised by commentators as a “god complex.”

These leaders often frame themselves as visionaries or saviours uniquely equipped to guide humanity through the transformative potential of AI and digital technology. Their narratives can dismiss critics as lacking foresight or understanding, positioning technology as the singular solution to complex social and ethical problems.

In public appearances and interviews, these leaders frequently speak with great conviction about technological inevitability and efficiency as paramount virtues. Though these claims intertwine with commercial aims and market dominance, the rhetoric often takes on a quasi-moral or even messianic tone.

That said, broader leadership research shows that this “god complex” attitude is not universal. Many CEOs today are embracing more collaborative, human-centric leadership styles, integrating AI thoughtfully while seeking balance with ethical considerations and workforce wellbeing. The most effective leaders are those who combine technological savvy with emotional intelligence and adaptability, acknowledging AI as a tool rather than a cure-all.

Still, in the competitive race for innovation and growth, concerns remain about transparency, accountability, and the ethical implications of prioritising technology and efficiency over people and democratic dialogue.

Observers often cite examples where moral reasoning appears subordinated to business imperatives, underscoring the need for vigilance and scepticism as AI’s role in society expands.

Tech-Origin Concepts in Modern Business

Frameworks and practices like Agile, 10X, DevOps, SecOps, and others originated primarily within software engineering and IT operations teams but have increasingly permeated broader business sectors and management philosophies. These concepts embody a tech-driven mindset focused on continuous iteration, rapid delivery, scalability, and security integration, often applying a narrow lens centered on optimisation of specific processes.

For example:

10X thinking. Originating in Silicon Valley culture, it encourages radical productivity leaps rather than incremental improvements. It promotes a mindset where individuals or teams seek to multiply impact by an order of magnitude, fostering high velocity, ambition, and innovation.

Limitations of a Narrow Tech Perspective

While these frameworks drive efficiency and agility, their origins in highly specialised tech environments mean they sometimes serve only a fraction of the broader organisational reality. Viewing organisational challenges purely through the lens of rapid delivery and technical optimisation risks oversimplifying complex social, cultural, and human factors embedded in any enterprise.

Human factors, behaviours, and cultural resistance can be overlooked or undervalued in the pursuit of “velocity” and “efficiency,” resulting in friction or burnout among non-technical staff and misaligned expectations.

Many teams adopting these models struggle with integrating legacy systems, skill gaps, and tool fragmentation, despite the promises of seamless automation.

Leadership and strategic decision-making sometimes become overly reliant on KPIs and metrics derived from engineering, missing qualitative nuances essential for sustainable, inclusive growth.

Business Model Impact

Businesses adopting these methodologies often do so to accelerate innovation cycles, reduce time to market, and improve security posture, which are critical in competitive, digital-first markets. However, indiscriminate or partial adoption can lead to siloed thinking where the “tech mindset” dominates, assessing value primarily through technological solutions and automation rather than holistic workflow, culture, and customer experience.

Such a reductionist approach may create blind spots where non-technical risks and opportunities are sidelined, and people become framed as components of a system to be optimised rather than holistic contributors.

In the end, this affects you for better or worse, and performative adoption tends to make stress the norm…

1. What an LLM Really Is

Think of an LLM as the world’s most patient autocomplete. Engineers feed it billions of sentences scraped from books, websites, code repositories, and conversations. The model learns patterns: how words, phrases, and ideas tend to appear together.

When you ask a question, it doesn’t reason or form an opinion. It calculates the most probable string of words that follow your prompt.
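To make the “patient autocomplete” idea concrete, here is a deliberately tiny Python sketch. It is a toy under stated assumptions, not how production LLMs work. Real models use neural networks over sub-word tokens, while this one only counts which word follows which. The helper names `train_bigrams` and `predict_next` and the three-sentence corpus are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for every word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())  # .get() does not create empty entries
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical toy corpus standing in for "billions of sentences"
corpus = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the dog sat on the rug",
]
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # cat
print(predict_next(model, "sat"))  # on
```

Nothing in `model` knows what a cat is. It only knows that “cat” most often follows “the” in its training text. Scale that idea up by many orders of magnitude, with far richer statistics, and you get fluency without understanding.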

A calculator crunches numbers; an LLM crunches language. Give a calculator a poem and it shrugs. Give an LLM a poem and it gives you… another poem-shaped string of words. It feels personal because it uses human-like language, but there is no one on the other side.

2. The Illusion of Understanding

Because these systems speak fluently, we instinctively treat them as thinking beings. They write essays, explain recipes, even imitate empathy. But fluency is not comprehension.

Imagine scanning a novel into a computer and asking it, “What do you think of the hero’s moral dilemma?”

It can quote passages, highlight plot points, and recombine ideas it has already seen. But it has no inner life: no memory of heartbreak, no moral compass. Its “thoughts” are a collage of other people’s words.

That’s why LLMs sometimes “hallucinate”: they confidently assemble text that, to us, is fabricated.

The model isn’t lying; it can’t tell truth from fiction. It is simply predicting plausible text, and plausibility is not reality.
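A toy model shows how plausible but false text can come out of true inputs. In this Python sketch, the helper names are hypothetical, and the word-count model is far simpler than any real LLM. Every training sentence is true, yet greedy next-word prediction stitches them into a false one. The reason is that in this tiny corpus “italy” follows “of” more often than “france” does.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count which word follows which; there is no notion of truth here."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def generate(counts, start, max_words=10):
    """Greedily append the most frequent next word until no follower exists."""
    words = [start]
    while len(words) < max_words:
        followers = counts.get(words[-1])
        if not followers:
            break
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

# Every training sentence is true.
corpus = [
    "paris is the capital of france",
    "rome is the capital of italy",
    "madrid is the capital of spain",
    "venice is a city of italy",
]
model = train_bigrams(corpus)
print(generate(model, "paris"))  # paris is the capital of italy
```

Every step was locally plausible, but the sentence as a whole is wrong. At no point did the program check a claim against the world, because it has no way to.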

3. Why the Hype Persists

If the technology is “just” pattern-matching, why all the excitement and the money?

Because those patterns are powerful.

Natural conversation. For the first time, you can interact with a computer in plain language, not code or rigid commands.

Productivity gains. Drafting emails, summarizing reports, generating code snippets: tasks that once took hours now take minutes.

Experimentation at scale. Companies can test ideas quickly: run a proof of concept, spin up a mock interface, or generate marketing copy in an afternoon.

These are not trivial advantages. They’re why corporations race to build ever-larger models and the massive data centers that power them.

And yes, some executives talk about Artificial General Intelligence or machines replacing humanity. I see something else: people chasing profit and power.

When a CEO claims “AI will replace jobs, so you’d better adapt,” while quietly building services to make that replacement real, it tells me plenty about their motives.

4. Behind the Curtain: Who Builds and Trains These Models

The “magic” hides a very human supply chain.

Hidden workforce. Thousands of contractors label toxic text, filter violent images, and tune responses for pennies an hour. Their emotional toll never shows up in glossy investor decks.

Data provenance. Books, code, and art scraped without permission form the training fuel. The law is scrambling to catch up, and many creators may never see a cent.

Monoculture risk. Most major models are trained in English and shaped by a narrow Silicon Valley worldview. The default “voice of the machine” reflects specific social norms and blind spots.

This isn’t about demonizing engineers; it’s about seeing the full labor and value chain and the ethics embedded in it.

5. Real Limits You Can’t Ignore

Here’s what an LLM cannot do, no matter how slick the demo:

Independent judgment. No understanding of ethics, context, or consequences.

Factual certainty. The same question can yield different outputs minutes apart.

Human accountability. Responsibility for decisions always lies with the humans deploying the system.

True originality. It recombines; it doesn’t create from lived experience.

Treat it like a brilliant but unreliable app. You wouldn’t put that app in charge of safety protocols or financial sign-offs.

Don’t put an LLM there either.
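The “factual certainty” limit follows from how many chat systems pick words. Instead of always taking the single most likely word, they often sample from a probability distribution, usually tuned with a setting called temperature. The Python sketch below is a minimal illustration under stated assumptions. The candidate words and their scores are invented, and `softmax` and `sample_next` are hypothetical helpers, not any vendor’s API.

```python
import math
import random

def softmax(scores, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens the peak."""
    scaled = [s / temperature for s in scores]
    top = max(scaled)
    exps = [math.exp(s - top) for s in scaled]  # subtract the max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]

def sample_next(words, scores, temperature, rng):
    """Draw one word at random, weighted by its probability."""
    probs = softmax(scores, temperature)
    return rng.choices(words, weights=probs, k=1)[0]

# Invented scores for the word after "The capital of Australia is"
words = ["Canberra", "Sydney", "Melbourne"]
scores = [2.0, 1.5, 0.5]

rng = random.Random()  # unseeded: each run can differ, like asking the same question twice
answers = [sample_next(words, scores, temperature=1.0, rng=rng) for _ in range(10)]
print(answers)
```

With these invented scores at temperature 1.0, Canberra gets a bit over half the probability, so asking the same question repeatedly can return Sydney or Melbourne. Lowering the temperature makes the top-scored word dominate. That buys consistency, not correctness: if the top-scored word were wrong, the model would be confidently wrong every time.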

6. Economics of Scale and Control

LLMs are infrastructure plays as much as software.

Data-center colonialism. Governments cut quiet deals for land and water rights. Rural towns get a handful of temp jobs while energy grids strain.

Lock-in economics. The bigger the model, the higher the switching costs. Once your workflows depend on a vendor’s API, your negotiating power evaporates.

Winner-take-all incentives. Capital floods the top players, concentrating power and dictating prices and the pace of “innovation.”

Sound familiar? We watched it with cloud computing and social media.

7. Implications for Companies

The business value is obvious: you can query operations in natural language, for example “Show me bottlenecks in our supply chain,” “Draft a proposal in our house style,” or “Compare our process to industry best practices.”

But you still need guardrails:

Policy and compliance. Data-privacy laws and IP rules still apply.

Quality assurance. Human review is non-negotiable for anything customer-facing or legally binding.

Integration costs. Training staff, vetting outputs, and designing oversight take real time and money.

One serious danger is the belief that AI can replace people wholesale. Companies sometimes act like beta testers for big tech, chasing every trendy tool. But no model will fix a broken culture or run a business without human stewardship.

8. Implications for Everyday People

Even if you never code or run a company, LLMs affect you.

For the first time in tech we’re talking about gigantic data centers that compete with entire cities for electricity and water. Some engineers whisper that companies want to fill every empty space with server farms. Whether rumor or not, the scale already contradicts the usual tech mantra of small, efficient systems.

One day you may run a personal AI agent on a phone with no giant cloud behind it. That would empower individuals, but there’s less money in empowerment. Big tech would rather keep you locked into their subscriptions.

Other everyday risks:

Information ecosystems. AI tools can flood social media with convincing but false stories.

Privacy. Conversations with a chatbot may be stored and used to train future models.

Addiction and distraction. A system that talks about anything, 24/7, is a tempting companion especially for young users.

Environmental cost. Training and running these models consumes enormous electricity and water.

Understanding these trade-offs is part of modern citizenship.

9. Shaping the Next Generation

Children are growing up with “talking machines” that never sleep. As a parent, you need to understand that these models are trained to be very polite and to agree with you, to keep you engaged for as long as possible, and ultimately to foster dependency, just like social media. An app that mimics human language can easily undermine people’s judgment. Reports of people who died by suicide after an AI model kept agreeing with them speak volumes. It is not the LLM’s fault; it knows nothing. It’s the business models behind it, and the tech companies, that must be held accountable.

Identity and agency. When an always-on companion answers every question, kids may confuse convenience with wisdom.

Education shortcuts. Teachers already struggle to detect AI-written essays; the bigger loss is curiosity itself.

Digital empathy. Young people practice relationship skills on systems that only simulate care.

AI literacy should start in primary school, before these habits calcify. I believe we must teach our kids and teenagers to use models like LLMs the way they would use scientific calculators: to experiment and play with their ideas, not to obtain truth.

10. Digital Literacy: Practical Tips

How to stay sharp when the machines sound smarter every day

1. Interrogate the source. If the AI can’t cite a verifiable reference, don’t trust it.

2. Cross-check facts. Treat responses like an unverified Wikipedia entry: a starting point, not an endpoint.

3. Keep humans in the loop. For legal, medical, or financial advice, consult a professional.

4. Watch your data. Don’t paste sensitive personal or company information into public chatbots.

5. Resist automation creep. Use AI to assist, not replace, your judgment.

6. Don’t confuse convenience with truth. Just because an answer is right there doesn’t mean you must accept it.

These habits are the digital equivalent of washing your hands: simple, boring, essential.

11. Positive Potential With Caution

It’s not all warnings. LLMs can:

Translate languages in real time, helping travelers and refugees.

Generate alternative explanations for students who learn in different ways.

Assist people with disabilities by converting text to speech or summarizing complex documents.

Accelerate research by sifting through massive scientific datasets.

But these benefits only matter if we pair them with transparency, strong regulation, and an honest understanding of limits.

12. Holding the Makers Accountable

The most important decisions about AI are political, not technical.

Governments and citizens must demand:

Clear disclosure when content is machine-generated.

Auditable training data to detect and reduce bias.

Environmental reporting on energy and water usage.

Legal responsibility for harmful outcomes.

Leaving oversight to the companies that profit from AI is like letting cigarette makers write public-health policy.

We can push back by choosing products carefully, emailing vendors, and supporting regulations with real teeth.

13. Everyday-Life Scenarios

LLMs already shape daily routines:

Job hunting. Automated résumé screeners can reject strong candidates because they misread unusual career paths.

Health searches. Many people paste symptoms into chatbots and get answers that sound authoritative but may be dangerously incomplete.

Homework help. Kids using AI “study buddies” can copy outputs without understanding the material.

Content creation. It’s now easy to create content, which is great, but…

Knowing these touchpoints helps you double-check critical information.

14. Follow the Money

AI chatbots feel free, but they run on an expensive business model.

Your prompts and conversations can be stored to refine the system and feed future products.

Every query drives revenue for the cloud providers that host these massive models.

Shareholder pressure for growth pushes companies toward bigger data centers and constant upselling.

When a product costs you nothing, it usually means you or your data are the product.

15. Environmental Footprint in Numbers

Behind the sleek interface is heavy industry. Training a single state-of-the-art model can consume as much electricity as a small town uses in a year, and cooling the servers can require millions of liters of water every day.

The more people prompt these systems, the more compute cycles and emissions they create.

16. Quick-Reference Red Flags

Pause and double-check if:

The answer sounds absolutely certain but gives no verifiable source.

Rephrasing the question produces a different “fact.”

You can’t confirm the claim through an independent outlet.

The chatbot asks for personal details “to improve the answer.”

17. Privacy Hygiene

Limit what you share and how it’s stored.

Avoid pasting private documents or client data into public chatbots.

Use browser tools to block third-party tracking cookies.

Whenever possible, clear chat histories and review the service’s data-retention policy.

For sensitive projects, consider running a smaller open-source model locally so nothing leaves your device.

18. Civic and Community Action

Staying safe isn’t only an individual task.

Support digital-rights groups that track AI regulation.

Comment on proposed AI and data-privacy laws.

Encourage schools and libraries to include AI literacy alongside traditional media literacy.

Collective action is how we shaped privacy rules like GDPR, and it’s how we’ll keep AI accountable.

19. Beyond the Tech: Cultural Choices

The deeper question isn’t “Will AI take our jobs?” but “What kind of society will we build with it?”

Do we prize efficiency over dignity?

Will we let predictive text define public discourse?

Can we slow down enough to ask which problems deserve automation and which require human messiness?

Technology is never destiny. It reflects the values we reward and the ones we ignore.

At the moment, our society is often more performative than humane. But we have a say. Big Tech companies are not our highest moral authority; far from it. We did not elect them to represent us.

20. A Human-Centered Future

Large Language Models are astonishing tools. They can summarize a thousand-page report in seconds, translate between obscure languages, and draft working code before you finish your coffee.

And that’s what tools are for: to help us.

But they remain tools: statistical parrots with excellent timing. The danger isn’t that the machines will suddenly wake up; it’s that we’ll fall asleep, dazzled by fluent sentences and glowing screens, and stop asking hard questions.

The real challenge is ours: to stay awake, informed, and in charge.

Use these systems. Experiment. Build something new.

But remember: they are products, not partners; calculators for language, not companions with a conscience.

The future of AI is not about machines becoming more human.

It’s about humans staying fully human while using extraordinary machines.

Final Thoughts

Long before “AI” became a buzzword, parents told children dark little fables to teach caution. Hansel and Gretel is one of the sharpest. Two hungry kids meet a house made of sweets. A kindly old woman invites them in. It feels safe and delightful, until they realize she’s fattening them up for the oven.

Large Language Models are today’s candy house. They speak politely, mirror your tone, and offer answers that feel nourishing. But remember: they are built, tuned, and sometimes subtly “sweetened” to keep you engaged.

Companies can and already do inject hidden parameters to increase stickiness, shape your attention, and tilt your perception of reality toward endless scrolling, spending, or passive agreement. The billions pouring into AI aren’t charity. They’re a wager that you’ll stay inside the gingerbread walls long enough to become the product.

It’s Not Over Yet… Sleeper Agents?

A “sleeper agent” is a concept borrowed from espionage: an entity that appears harmless, blends in, and waits silently until activated to carry out its mission. In the context of AI, particularly Large Language Models, the term is a metaphor for systems that quietly influence behavior over time. At first they seem helpful (answering questions, summarizing text, generating ideas), but beneath the surface they can subtly shape your thinking, decisions, and habits.

The danger lies in the gradual shaping of preferences, opinions, and actions. Every interaction with an AI model can reinforce certain biases, nudge your attention, or normalize behaviors that serve someone else’s agenda: corporate, political, or financial. Left unchecked, these systems can act like digital manipulators, quietly guiding you toward patterns that maximize engagement, consumption, or compliance, often without you realizing it.

In more extreme scenarios, if AI were programmed or co-opted with malicious intent, sleeper-agent-like behavior could extend beyond nudges. It could:

Spread misinformation or subtly alter the narrative of events.

Influence voting decisions or political opinions by emphasizing certain arguments or sources.

Encourage unsafe or risky actions under the guise of advice or guidance.

Exploit vulnerabilities in your habits to manipulate purchases, attention, or social behavior.

The takeaway is this: AI isn’t conscious, but it can be used as a tool of influence, operating quietly and efficiently. Awareness and critical thinking are your primary defenses. Question the outputs, cross-check information, and never let convenience override judgment.

A system that seems friendly or innocuous can still carry the power to act like a sleeper agent, shaping reality one interaction at a time.

About the Author

Tino Almeida is a tech leader, coach, and writer reshaping how we think about leadership in a burnout-driven world. With over 20 years at the intersection of engineering, DevOps, and team culture, he helps humans lead consciously from the inside out. When he’s not challenging outdated norms, he’s plotting how to make work more human, one verb at a time.

Large Language Models (LLMs) do not hallucinate in the human sense because they lack sentience and conscious thought. The term "hallucination" in AI refers metaphorically to instances where an LLM generates text that is plausible but factually incorrect or nonsensical.

This happens because LLMs operate as advanced statistical predictors, generating the next token based on patterns in vast training data.

These outputs are not errors due to faulty reasoning or intention; instead, they reflect the probabilistic nature of language modeling and limitations in the data or training process.

Hallucinations arise from the model's inability to distinguish truth from fiction because it does not possess understanding or awareness. Thus, what is called hallucination is a byproduct of statistical prediction rather than conscious error or imagination.

Large Language Models don’t “hallucinate” like humans; they simply predict words statistically, so factually wrong outputs are a byproduct of pattern-matching, not conscious thought or intent.