The AI in your pocket isn’t what you think it is.

What do I keep telling people?

I’ve been getting a lot of questions lately from customers and coaching clients about what large language models (LLMs) actually are.

Not the marketing spin, not the sci-fi promises, but the real mechanics and limitations. So I wrote this piece to cut through the noise and share my take, from both my technical background and my day-to-day experience using these tools with teams and individuals. I hope I’m not repeating myself.

LLMs are everywhere now. You see them in ChatGPT, Gemini, Perplexity. They’re writing blog posts, summarizing reports, generating code, drafting contracts, even writing the damn email your boss is about to send you.

People keep asking me: what are these things, really? Are they brains? Are they the first step toward machine intelligence? Or are they just the fanciest autocomplete in history?

After more than twenty years in tech, building systems, leading teams, coaching leaders, and now living with these tools daily, I can tell you this: the hype is loud, but the truth is quieter, messier, and far more interesting.

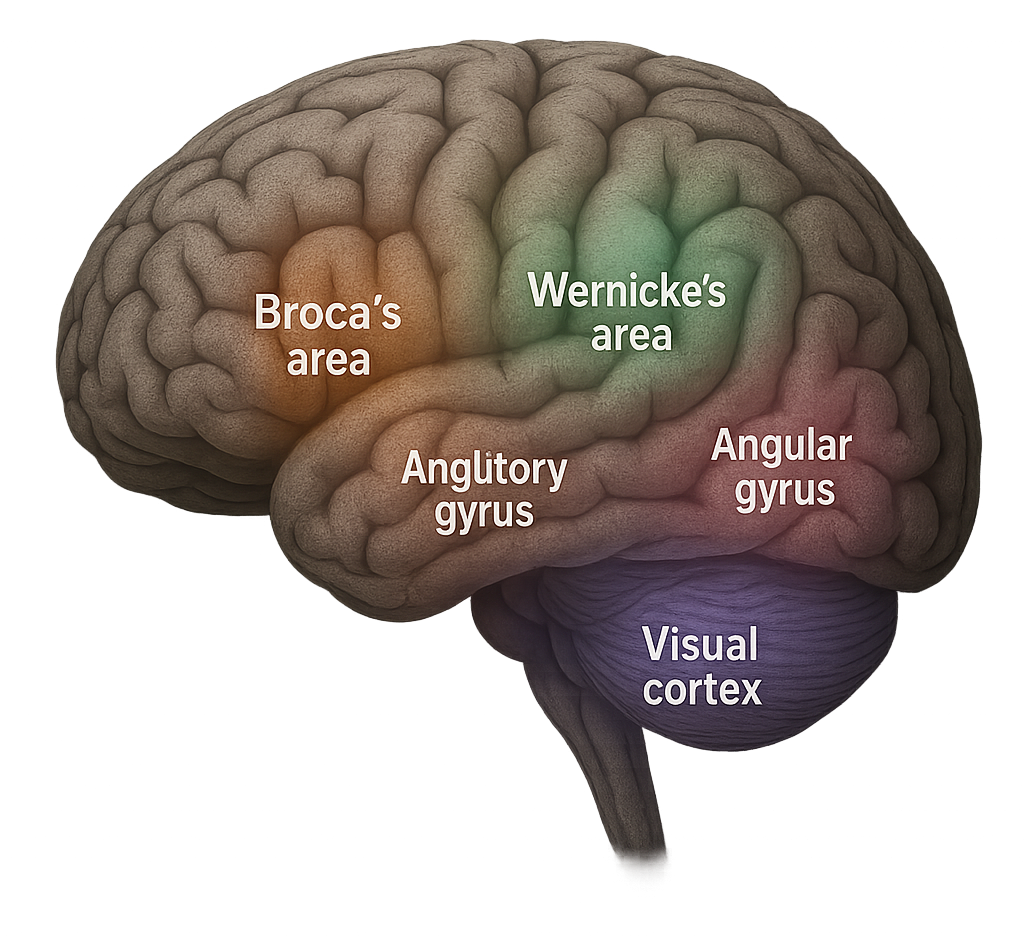

How Brains Do Language vs. How Machines Do It

Spoiler: there’s quite a lot of jargon here. But I hope you get the idea: being human is far more complex than any artificial intelligence model.

When humans use language, our brains light up in complex, deeply connected ways. Broca’s area helps us form sentences. Wernicke’s area makes sense of meaning. The angular gyrus ties words to what we’ve seen, felt, and lived. The auditory and visual cortices feed in raw sights and sounds so we can ground words in reality.

Language, for us, isn’t just text. It’s experience. It’s memory. It’s our bodies carrying scars, joy, and emotions. When I say “home,” I don’t just say four letters; I feel my grandmother’s kitchen, I smell the soup on the stove, I remember the quiet safety of childhood evenings. That’s what meaning looks like.

LLMs don’t have that. If they had “brain parts,” they’d mimic a sliver of Broca’s and Wernicke’s functions: stringing words together, predicting which ones fit. But they don’t know what those words mean. They don’t have memories. They don’t feel. They don’t tie a word to a lived experience. They produce language patterns that look human, but with no depth behind them.

And all you hear from certain people claiming their models are intelligent is, at best, untrue and very far-fetched.

That’s why I always tell people: they’re not thinking. They’re just guessing.

No Magic. Just Math.

Strip away the marketing gloss and here’s what an LLM really is: a gigantic parrot that has read almost the entire internet. It doesn’t study meaning. It studies probabilities. It learns that when someone writes, “How are you…” the next word is usually “today” or “doing.”

Once trained, it becomes a supercharged autocomplete. You prompt it, and it predicts what words are most likely to come next based on everything it has seen before. That’s it. No insight. No creativity in the human sense. Just math and pattern-matching at an inhuman scale.
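To make the “supercharged autocomplete” idea concrete, here is a toy sketch in Python. It is a deliberately simplified, hypothetical illustration, not how production LLMs work: real models use transformer neural networks over subword tokens with billions of learned parameters, while this sketch only counts which word follows which (a so-called bigram model) in a tiny made-up corpus. The function names and the corpus are my own assumptions for illustration.

```python
# Toy bigram "autocomplete": a hypothetical illustration, NOT how real LLMs work.
# Real models use transformer networks over subword tokens; this only counts words.
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every word, which words follow it in the corpus."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def next_word_probabilities(follows, word):
    """Turn raw follow-counts into probabilities for the next word."""
    counts = follows.get(word.lower(), Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}


def autocomplete(follows, prompt, length=3):
    """Greedily append the most frequent next word, one word at a time."""
    words = prompt.lower().split()
    for _ in range(length):
        counts = follows.get(words[-1])
        if not counts:
            break  # this word was never followed by anything: stop guessing
        words.append(counts.most_common(1)[0][0])
    return " ".join(words)


# A tiny, made-up "training set" standing in for the internet.
corpus = [
    "how are you today",
    "how are you doing",
    "how are you today my friend",
]
follows = train_bigrams(corpus)
print(next_word_probabilities(follows, "you"))  # today ~0.67, doing ~0.33
print(autocomplete(follows, "how are", length=2))  # how are you today
```

Nothing in this code knows what “today” means. It only knows that “today” followed “you” more often than “doing” did. Real LLMs are vastly more sophisticated, and they usually sample from the probabilities instead of always taking the top word, but the output is still a prediction of likely continuations, not understanding.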

And yet sometimes the results are stunning. Which is why this conversation matters.

The Upside We Can’t Ignore

These models can do incredible things. They can draft an essay, a business plan, or a poem in seconds. They can summarize dense reports into something you can actually digest. They can translate across languages with fluency that puts old translation apps to shame.

In engineering, they can explain Kubernetes better than some official documentation I’ve had to slog through. They can spit out boilerplate code at a pace no intern can match. And in conversation, they can hold a rhythm that feels eerily natural, closer to how we actually talk than any chatbot we’ve built before.

That’s the draw: speed, fluency, access. It’s intoxicating.

The Flaws Nobody Wants to Talk About

But here’s what people gloss over. LLMs don’t actually know anything. They can hallucinate, confidently inventing sources, commands, or facts that don’t exist. They mirror the biases of the internet they’re trained on, which means racism, sexism, and manipulation creep into the system. They have no concept of ethics, consequences, or values. They’re locked in time, frozen at their last training cutoff unless retrained.

And yet because they sound fluent, we project intelligence onto them. Our brains are wired to equate language fluency with understanding.

That’s the danger: we trust them more than we should. And when we start outsourcing judgment to them, exploitation isn’t far behind.

The Danger of Commodifying Human Intelligence

This is probably something we don’t talk about enough.

This is not Outlook, Excel, or any other tool. With AI, things will change.

Sometimes I get the feeling we’re getting AI completely wrong. The commercial versions millions of people rely on aren’t designed with us or for us in mind. If they were, we wouldn’t be facing ethics issues, manipulations, lies, and systemic flaws that touch millions every day.

What troubles me most is the push to commodify human intelligence: to package human labor, to replicate tasks traditionally performed by people, to reduce us to mere outputs. It feels exploitative.

It raises serious questions about dignity, agency, and the social impact of the systems we’re building.

We should be debating these questions, questioning the frameworks, and examining the incentives behind AI deployment, not just glorifying the next model’s capabilities. Otherwise, we risk letting technology define humanity on someone else’s terms.

My Technical Experience

Let me get concrete. I’ve watched these tools amaze and betray people in equal measure.

In engineering contexts, I’ve had an LLM explain a system more clearly than a senior engineer could. Minutes later, I’ve seen it invent Kubernetes commands out of thin air, commands that would send a junior engineer straight into a wall. I’ve seen it generate code in seconds, only to burn hours debugging the subtle errors it introduced.

The lesson I’ve taken: treat these systems like brilliant interns. They’re fast. They’re confident. They’re often dazzling. But you’d be reckless to ship their work without review.

My Personal Experience

As a coach, I’ve used LLMs in ways that genuinely help people. I’ve had them roleplay tough workplace conversations so clients can rehearse. I’ve used them to generate prompts for reflection when someone feels stuck. I’ve asked them to translate dense, academic writing into something an exhausted engineer can actually process after a 12-hour shift.

But I’ve also seen the downside. People hand over too much. They let the AI write their CVs, cover letters, interview answers. And the result? Bland, beige sludge. Generic phrases that could have come from anyone, with no personality, no sharp edge, no human messiness.

That’s my biggest worry: that we’ll outsource our voice to machines until our individuality gets watered down into corporate AI paste.

Why We Must Question More

The narratives around LLMs are written by the companies building them. And those narratives serve their interests, not ours. They tell us these tools are intelligent. They tell us they’re inevitable. They tell us they’ll replace jobs but also create new ones. They tell us they’ll democratize knowledge.

Some of that may be true. Much of it is spin.

Here’s what they don’t emphasize: the staggering energy consumption. The training data scraped without consent. The reinforcement of power structures that already profit from our labor and attention.

So when you use one, stop and ask: Who benefits if I trust this? What power structures does this reinforce? Am I thinking less because of this? Is my voice being erased in the process?

We don’t need blind adoption. We need critical adoption.

What About the Data?

We’ve built a culture that treats data like gospel: pure, objective, and beyond question. But that’s a dangerous illusion.

Data is always shaped by who collects it, how it’s framed, and crucially, what gets left out. LLMs trained on vast datasets inherit every bias, distortion, and agenda embedded in that data.

A 2025 UNESCO study found AI models disproportionately associate women with "home" and men with "executive" roles, embedding outdated stereotypes.

Research shows AI resume-screening tools favor white male names over Black or female names, perpetuating systemic inequality.

If we stop questioning AI output and treat it as unquestionable fact, we hand unchecked power to those who control the data—enabling them to bend reality at scale.

History warns us that control over information is control over people. With AI, that control is unprecedented, and scrutinizing it is urgent.

What Comes Next

Technically, these models will keep improving. They’ll get slicker. They’ll fake memory. They’ll specialize for industries like law, medicine, or finance. They’ll embed themselves into more of our tools and workflows until they’re hard to avoid.

And yes, you will see more and more companies using AI in almost all their services, and some will replace people for cost’s sake.

But this is a useful reminder: companies that behave like this don’t really care about people, plain and simple.

But don’t confuse fluency with understanding. These aren’t steps toward human-like intelligence. They’re steps toward better mimicry. Artificial General Intelligence isn’t around the corner. What we’re building is something different: a system that’s shockingly good at dressing up as intelligent without ever crossing that line.

AI’s growth isn’t just technical; it’s political.

When I look at today’s AI industry, I don’t just see innovation; I see extraction.

The way big tech companies scoop up data from across the globe feels uncomfortably familiar. It mirrors some of history’s darkest chapters: colonialism and imperialism. Back then, powerful nations stripped resources from others with little regard for ownership, fairness, or long-term harm. Today, it’s not gold, oil, or land being taken; it’s data. Our conversations, our culture, our collective digital labor are being absorbed, repackaged, and sold back to us.

The story is the same: the powerful profit, the rest are sidelined.

LLMs don’t just “predict text.” They impose dominant cultural narratives. They often amplify the voices of those who already have power while drowning out diverse perspectives. The subtlety of it makes it even more dangerous, because the bias doesn’t scream at you. It seeps in quietly, shaping what we read, how we think, and what we consider “normal.”

Meanwhile, surveillance tools and AI-driven control systems disproportionately impact marginalized communities. These technologies don’t arrive in a vacuum; they reinforce old patterns of oppression, just in shinier, more automated ways. Facial recognition, predictive policing, algorithmic bias in hiring: these aren’t bugs, they’re consequences of an industry that prioritizes scale and speed over justice and dignity.

And here’s the uncomfortable truth: this isn’t just about the technology. It’s about the business model. AI companies race to build bigger, faster, “smarter” models, but the incentives are always tilted toward investment, dominance, and market capture, not ethics, transparency, or long-term human benefit. The narrative is designed to make us believe this is inevitable: that the only path forward is to trust them, hand over more data, and accept the trade-offs.

But inevitability is a myth.

The future of AI is still being written.

Around the world, researchers, activists, and even some business leaders are pushing for a different path. One rooted in fairness, accountability, and inclusion. One that respects sovereignty: of data, of communities, of cultures. One that shares the benefits of AI rather than concentrating them in the hands of a few corporations in Silicon Valley, Seattle, or Shenzhen.

We’ve seen what happens when technology scales without guardrails. Social media promised connection but left us polarized. The gig economy promised flexibility but left millions with precarity. Do we really want to repeat the same cycle with AI, only bigger, faster, and more irreversible?

I don’t.

The fight against digital imperialism has already started. The question is whether we’ll sit on the sidelines or take part in shaping it. For me, that means asking harder questions of the tools I use, the companies I trust, and the systems I help build. It means staying skeptical of hype while staying open to possibility. And it means pushing for leadership, ethical leadership, that sees AI not as a quick profit grab but as a tool to improve lives, to expand creativity, and to bring people closer together instead of dividing them.

The Bottom Line

Large language models are powerful. They save time. They break barriers. They spark creativity.

But they are not minds. They are not colleagues. They are not leaders. They are tools: extraordinarily advanced tools, yes, but still tools.

The real danger isn’t in using them. The danger is in forgetting what they are, in handing them our judgment, our values, and our voices without question, and in how low we place ourselves when we compare ourselves to these models. Sometimes I feel that is exactly what big tech wants us to feel, so they can point at us and say we are the problem and they are the solution. For me, that is not what technology should be about.

And by the way, LLMs are not intelligent. They are still brute force: a different kind, but brute force nonetheless. Calling LLMs intelligent is an insult to humanity.

So here’s my plea: use them, experiment with them, push them to their limits. But stay skeptical. Stay human. Stay awake.

Because the intelligence that matters isn’t inside the model. It’s inside us: how we choose to use it, how we question it, and how we refuse to surrender the messy, imperfect humanity that no machine will ever replicate.

About the Author

Tino Almeida is a tech leader, coach, and writer reshaping how we think about leadership in a burnout-driven world. With over 20 years at the intersection of engineering, DevOps, and team culture, he helps humans lead consciously—from the inside out. When he’s not challenging outdated norms, he’s plotting how to make work more human—one verb at a time.


Here are some simple, easy educational resources that can help those who want to understand AI technology better.

Video 1: “You Don’t Understand AI Until You Watch This”

https://www.youtube.com/watch?v=1aM1KYvl4Dw

Video 2: “ChatGPT Explained Completely”

https://www.youtube.com/watch?v=-4Oso9-9KTQ

-Fun Tools-

The Transformer Explainer: https://poloclub.github.io/transformer-explainer/

Teachable Machine: https://teachablemachine.withgoogle.com/