#5 - How teams can take advantage of AI agents

It's all about improving communication between teams

I want to talk about something that’s been on my mind a lot lately: how teams can actually use AI agents in a way that makes sense, without losing what makes us human. I’ve spent years working in tech, leading teams, and trying to figure out how to make tools work for people, not the other way around. And let me tell you, AI, especially these new AI agents, can be a game-changer if we use it right.

But if we don’t, they can just add more noise, more confusion, and more distance between people who should be working together.

I fear many companies will see AI as a cheaper replacement for their workforce, the same way companies outsource work to countries where labor is cheaper. There is nothing wrong with outsourcing in itself, but it creates division and a blame culture. No one likes to push a company to new levels of automation and the adoption of certain work standards, only to receive a thank-you letter and a redundancy package in the end.

1. Custom AI agents as “team experts”

Let’s talk about what these AI agents actually are. They’re not magic. They’re not replacements.

They’re tools that can be shaped to fit the way your team works.

Domain-Specific Agents

Every team has its own way of doing things: its own knowledge, its own history, its own quirks.

DevOps teams have their logs and deployment strategies.

Security teams have their compliance rules and risk assessments.

Product teams have their user insights and roadmaps.

Instead of using a generic AI tool that doesn’t get your team, why not train an agent to specifically understand your team’s world?

For example:

A DevOps agent could analyze monitoring logs, suggest fixes based on past incidents, and even draft runbooks. But here’s the key: the agent doesn’t make the final call. It suggests, and a human approves. That way, the team stays in control, but the agent helps them move faster.

A Security agent could offer a “Check for Compliance” service that Product teams can use before launching a new feature. No more waiting for a security review to be scheduled; just ask the agent for a quick sanity check.

Self-Service for Other Teams

One of the biggest wastes of time in any company is the back-and-forth between teams. “Hey, can Security review this?” “DevOps, what’s the status on this deployment?”

With custom AI agents, teams can expose certain capabilities, like a Slack command or an internal API, so other teams can get answers without waiting for a meeting or an email response.
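To make the self-service idea a bit more tangible, here is a minimal Python sketch of a “Check for Compliance” capability sitting behind a chat-style command. Everything in it is an assumption for illustration: the rule names, the `check_compliance` and `handle_command` functions, and the `<feature> trait1,trait2` command format are hypothetical, and a real agent would reason over far richer context than a lookup table.

```python
from dataclasses import dataclass, field

# Hypothetical rules a Security team might maintain; purely illustrative.
COMPLIANCE_RULES = {
    "stores_personal_data": "Personal data needs a documented retention policy.",
    "calls_third_party_api": "Third-party integrations need a vendor review.",
    "public_endpoint": "Public endpoints need authentication and rate limiting.",
}


@dataclass
class ComplianceReport:
    feature: str
    findings: list[str] = field(default_factory=list)

    @property
    def needs_human_review(self) -> bool:
        # Any finding means a person from Security should take a look.
        return bool(self.findings)


def check_compliance(feature: str, traits: set[str]) -> ComplianceReport:
    """Collect the rule messages that apply to the declared traits."""
    findings = [msg for trait, msg in COMPLIANCE_RULES.items() if trait in traits]
    return ComplianceReport(feature, findings)


def handle_command(text: str) -> str:
    """Parse '<feature> trait1,trait2', as a chat command might deliver it."""
    feature, _, raw_traits = text.strip().partition(" ")
    traits = {t.strip() for t in raw_traits.split(",") if t.strip()}
    report = check_compliance(feature, traits)
    if not report.needs_human_review:
        return f"{feature}: no findings. A human still signs off before launch."
    lines = [f"{feature}: {len(report.findings)} finding(s), please loop in Security:"]
    lines += [f"- {finding}" for finding in report.findings]
    return "\n".join(lines)


if __name__ == "__main__":
    print(handle_command("checkout-v2 stores_personal_data,public_endpoint"))
```

The point of the sketch is the shape, not the rules: another team gets a quick answer on its own, and the answer itself says when a person needs to step in.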

Human Decision Gate

This is non-negotiable: AI should inform, but humans should decide. Always. No exceptions. If an agent suggests a fix, a human reviews it. If it flags a risk, a human assesses it.

The goal isn’t to remove people from the equation; it’s to give them better information so they can make better decisions.
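One way to picture a decision gate in code is a suggestion that simply cannot be applied until a named person has approved it. The sketch below is only an illustration under my own assumptions: the `Suggestion`, `review`, and `apply_suggestion` names are hypothetical and not taken from any particular agent framework.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    summary: str
    proposed_by: str                    # name of the agent that made the suggestion
    status: Status = Status.PENDING
    reviewed_by: Optional[str] = None   # name of the human reviewer, once reviewed


def review(suggestion: Suggestion, reviewer: str, approve: bool) -> Suggestion:
    """Record a human decision; the proposing agent cannot review itself."""
    if reviewer == suggestion.proposed_by:
        raise ValueError("the proposing agent cannot approve its own suggestion")
    suggestion.status = Status.APPROVED if approve else Status.REJECTED
    suggestion.reviewed_by = reviewer
    return suggestion


def apply_suggestion(suggestion: Suggestion) -> str:
    """Refuse to act on anything a human has not explicitly approved."""
    if suggestion.status is not Status.APPROVED or suggestion.reviewed_by is None:
        raise PermissionError("a human must approve this suggestion first")
    return f"applied: {suggestion.summary} (approved by {suggestion.reviewed_by})"


if __name__ == "__main__":
    s = Suggestion("restart worker pool", proposed_by="devops-agent")
    review(s, reviewer="maria", approve=True)
    print(apply_suggestion(s))
```

Keeping the reviewer’s name on the record also keeps accountability where it belongs: with a person.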

2. AI as a force multiplier for collaboration

AI agents shouldn’t just be about getting things done faster. They should help teams work together better.

The idea of pushing out hundreds of lines a second only creates huge cognitive overload and more things to test and do. Be smart on this one.

Live Documentation & Advisory

How much time do we waste digging through outdated docs or asking, “Wait, how did we set this up again?” An AI agent can keep documentation alive, updating API specs, onboarding guides, or architecture notes as the code and discussions evolve.

No more “oops, that wiki page is from 2020.”

Pattern Recognition

Ever feel like you’re solving the same problem over and over? An agent can flag recurring issues like, “Hey, this error looks like the outage we had last month. Here’s how we fixed it then.” That way, teams spend less time reinventing the wheel and more time actually moving forward.
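As a toy illustration of that kind of pattern matching, the sketch below compares a new error message against a small list of past incidents using plain string similarity from Python’s standard library (`difflib.SequenceMatcher`). The incident data, the `find_similar_incident` helper, and the 0.6 threshold are all made up for the example; a real agent would likely use something much richer than string similarity.

```python
from difflib import SequenceMatcher

# Hypothetical incident history; in practice this would come from your tracker.
PAST_INCIDENTS = [
    {
        "error": "connection pool exhausted on orders-db",
        "fix": "Raised the pool size and added a query timeout.",
    },
    {
        "error": "TLS certificate expired for api gateway",
        "fix": "Renewed the certificate and added an expiry alert.",
    },
]


def find_similar_incident(error, incidents, threshold=0.6):
    """Return the most similar past incident, or None if nothing is close enough."""
    best, best_score = None, 0.0
    for incident in incidents:
        score = SequenceMatcher(None, error.lower(), incident["error"].lower()).ratio()
        if score > best_score:
            best, best_score = incident, score
    return best if best_score >= threshold else None


if __name__ == "__main__":
    match = find_similar_incident(
        "connection pool exhausted on orders-db replica", PAST_INCIDENTS
    )
    if match:
        print(f"This looks like a past incident. Last time: {match['fix']}")
```

Note that the function only surfaces the earlier fix; deciding whether it applies this time is still up to the team.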

Process Alignment

AI can also nudge teams toward best practices. Imagine an agent saying, “This change requires a security review. Should I schedule it for you?” It’s not about policing; it’s about making sure nothing slips through the cracks.

Workflow Integration Map

Here’s where managers come in. You don’t need to control every little thing the AI does, but you do need to define:

Where does AI fit in? (Input: data, Process: analysis, Output: recommendations)

Where do humans step in? (Judgment, ethics, prioritization)

Clarity here prevents chaos later.

3. AI for creative and strategic work

This is where things get interesting. AI isn’t just for grunt work; it can help with the big stuff, too.

Idea Generation

Stuck on a problem? An agent can brainstorm new features, architectures, or strategies based on your team’s knowledge and industry trends. But, and this is important, don’t stop there.

Imagination Safeguard

After the AI spits out ideas, the team should run a divergence session, no AI allowed. Generate your own ideas, compare them to the AI’s suggestions, and ask: Which ones feel original? Which ones are actually feasible? This keeps the team’s creativity sharp.

Risk Assessment

Before rolling out a big change, an agent can simulate outcomes or flag potential risks. “This change might conflict with Marketing’s campaign. Should we sync with them first?” It’s like having a second set of eyes that never gets tired.

Creativity Retention

Here’s a rule I swear by: Hold “AI-free” ideation workshops every quarter. No tools, no agents, just people thinking and creating together. It keeps those intuitive, creative muscles strong.

How Managers Can Support Teams (Without Micromanaging AI)

Managers, this one’s for you.

Your job isn’t to control how teams use AI; it’s to set them up for success.

1. Set Guardrails, Not Handcuffs

Define boundaries: What can the agent do? (Draft code? Sure.) What’s off-limits? (Deployment decisions? Absolutely.)

Ownership model: Assign an “AI steward” in each team, someone who handles training, updates, and audits. Keep accountability human.

Ethics & bias review: Regularly check the agent’s outputs for accuracy, fairness, and alignment with your company’s values.
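Guardrails like these can be written down as something as plain as an allow-list. The sketch below is a deliberately small Python illustration; the action names and the `authorize` function are hypothetical, and the “ask the steward” default is my own assumption about how a team might handle anything it hasn’t classified yet.

```python
# Hypothetical action names; each team would define its own lists.
ALLOWED_ACTIONS = {"draft_code", "summarize_logs", "update_docs"}
HUMAN_ONLY_ACTIONS = {"deploy", "change_access_rights"}


def authorize(action: str) -> str:
    """Classify an action the agent wants to take.

    Returns "allowed", "human_only", or "ask_steward" for anything
    the team has not explicitly classified.
    """
    if action in HUMAN_ONLY_ACTIONS:
        return "human_only"
    if action in ALLOWED_ACTIONS:
        return "allowed"
    return "ask_steward"


if __name__ == "__main__":
    for action in ("draft_code", "deploy", "rotate_api_keys"):
        print(f"{action}: {authorize(action)}")
```

Defaulting unknown actions to the steward, rather than to “allowed”, keeps the boundary conservative without blocking the team outright.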

2. Foster a Culture of Trust and Curiosity

Encourage experimentation: Let teams test agents in low-risk areas first, like documentation or code reviews, before scaling up.

Transparency: Make sure everyone knows how their agent works: what data it uses and what it can’t do. No black boxes.

Feedback loops: Hold monthly “AI Learnings” sessions. What worked? What failed? Keep the conversation going.

3. Focus on Outcomes, Not Oversight

Metric-driven autonomy: Don’t measure how much the team uses AI. Measure the impact, like faster onboarding, fewer bugs, or happier teams.

Cross-team audits: Have Security review DevOps’ agents. Have Product check Data’s insights. Fresh eyes catch blind spots.

Skill preservation: If human creativity or problem-solving starts to decline, dial back the AI. The goal is to enhance skills, not replace them.

4. Invest in “AI Literacy”

Training: Run workshops on prompt design, agent tuning, and ethical use. Everyone should know how to work with AI, not just how to turn it on.

Documentation: Encourage teams to document their agent’s strengths, weaknesses, and “personality.” “Our agent is great at optimization but overly cautious on risk.”

Skill alignment: AI should reinforce human skills (critical thinking, collaboration, ethics), not automate them away.

Custom AI agents as a service

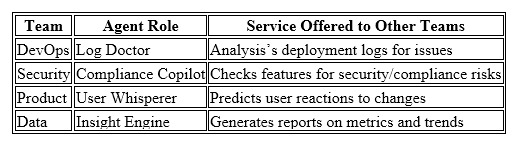

Let’s make this concrete. Here’s how it could work in a real team:

How it works:

Teams train their agents with their own data and expertise.

Other teams interact with them via Slack, APIs, or Jira plugins.

Agents provide insights, but humans make the final call.

Key mindset shifts

To make this work, we need to change how we think about AI:

From “AI as a Tool” to “AI as a Teammate”: Treat agents like teammates: guide them, critique them, help them improve.

From “Control” to “Collaboration”: Managers should empower teams to shape their AI, not police it.

From “Siloed Expertise” to “Shared Intelligence”: Agents can bridge knowledge gaps between teams, reducing friction and duplicate work.

New dimensions for human-centered adoption

Finally, let’s talk about what really matters:

Human Decision Gates: No matter what, people make the final calls.

Imagination Calibration: Regularly compare human ideas vs. AI ideas. Keep your team’s creativity alive.

Wellbeing & Engagement Metrics: Track how AI use affects motivation and collaboration. If people feel replaced or stressed, adjust.

Visual Workflow Maps: Make it clear where AI supports, where humans decide, and where skills grow.

A question for you

So, here’s what I want to know.

What’s the first mission you’d assign a custom AI agent?

Log analysis?

Documentation updates?

Security scanning?

Creative ideation?

Or is there some recurring bottleneck in your team that you’d rather automate first?

Let’s start small, learn fast, and keep the human element at the center.

Because at the end of the day, that’s what this is all about.

Not replacing people. Empowering them.

About the Author

Tino Almeida is a tech leader, coach, and writer reshaping how we think about leadership in a burnout-driven world. With over 20 years at the intersection of engineering, DevOps, and team culture, he helps humans lead consciously from the inside out. When he’s not challenging outdated norms, he’s plotting how to make work more human, one verb at a time.

I want to agree and disagree. I want to be contrarian about this. I think there is a place for human-in-the-loop. I strongly agree that AI should work beside you. But it seems that what you are suggesting introduces something people forget is a negative: human bias. Many decisions humans make are based on wrong information, maybe someone’s opinionated Reddit post. They get tired. And being relegated to the job of pushing the yes key or the no key belittles humanity. People are worried that AI will steal our jobs and that we need a place in this scenario. Luddite thinking. AI is stealing, and will continue to steal, the jobs we created it to do better than us. Here is a typical scenario. AI can read medical imaging far better than a radiologist. This is now just fact. But a doctor must sign off on the results? Why? It’s not because the doctor will ever say, “No, that’s a peanut, not a tumor.” It’s because insurance companies want a human to say, “I signed this.” Culpability. Does AI make mistakes? Yes. Far fewer than humans. An AI will never treat a woman differently from a man, or a black person differently from a white person. It won’t make decisions based on greed. So, partner, yes. Relegate humans to being approvers? Absolutely not.